Oct 2023

Meetings in Your Own Metaverse: How to Scan a Room

There are so many tools to make 3D models and digital twins, but how do they work?

Andrew Blance

Innovation Consultant

We’ve been thinking about scanning tools and VR headsets for over a year now, and we have finally made the time to begin running our metaverse meeting project! What is a meeting in the metaverse like? Is it worth it? How can you do it? Can you import your own rooms into it? In this week’s newsletter, Katie takes a look at a range of easily accessible 3D scanning apps, with the aim of making realistic models of our office’s meeting rooms.

How to Scan a Room

Can you scan a room and then visit it in VR? This is the question we have been exploring over the past few weeks. Since Facebook rebranded as Meta and began promoting the metaverse, a virtual world you can visit by putting on a VR headset, there has been a lot of hype surrounding VR as the future of work. Waterstons works with a lot of clients who are intrigued by this field, such as manufacturers, architects and engineers, and who are also interested in digital twins and 3D models. I set out to investigate the feasibility of this. How easy would it be to achieve? Which tools are available? What is the experience like?

This started with exploring and evaluating the scanning software currently available. The first step in the process is to scan the room you want to visit in VR; in my case, that was a meeting room in our Durham office.

There are many apps available that allow you to do this, and they often require a LiDAR camera. Three capture techniques come up repeatedly:

- LiDAR cameras measure the distance to a target using a laser: they record the time that elapses between emitting the laser pulse and its reflection returning to the camera.

- Photogrammetry is the process of taking lots of photos of a room/object and stitching these together to produce a 3D model.

- Stereo photography captures 3D images by imitating human binocular vision through the use of a stereo camera: a camera with two or more lenses, each with its own image sensor or film frame.
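The first and third techniques both come down to simple arithmetic. As a rough sketch (the function names and the example numbers are illustrative assumptions, not taken from any particular camera's SDK), time-of-flight ranging halves the round-trip distance travelled by the laser pulse, while a stereo camera estimates depth from how far a feature shifts between its two images:

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_time_s):
    """Distance to a target from the round-trip time of a laser pulse.

    The pulse travels to the target and back, so the one-way
    distance is half of (speed of light x elapsed time).
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


def stereo_depth_m(focal_length_px, baseline_m, disparity_px):
    """Depth from stereo disparity: depth = focal length x baseline / disparity.

    focal_length_px: focal length of the lenses, in pixels
    baseline_m:      distance between the two lenses, in metres
    disparity_px:    horizontal shift of a feature between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical values: a 20 ns round trip, and a feature that
    # shifts 20 px between two lenses 9.5 cm apart.
    print(f"{tof_distance_m(20e-9):.2f} m")                # 3.00 m
    print(f"{stereo_depth_m(640.0, 0.095, 20.0):.2f} m")   # 3.04 m
```

Both examples describe a wall about three metres away. The timing involved (tens of nanoseconds) hints at why time-of-flight sensing needs dedicated hardware, whereas stereo depth only needs two ordinary image sensors a known distance apart.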

The cameras I used were:

- iPad Pro (11th Gen) with inbuilt LiDAR scanner.

- Intel RealSense D455 depth camera (this model senses depth with stereo imaging rather than LiDAR).

Meta Scan

Meta Scan is an iPad app that allows you to scan a room using LiDAR. Its main selling point is that it lets you “Export everywhere”, but this only seems to apply if you pay for the Pro version, which is $6.99 a month. I found the scans it created were blurry, with objects overlapping or appearing twice.

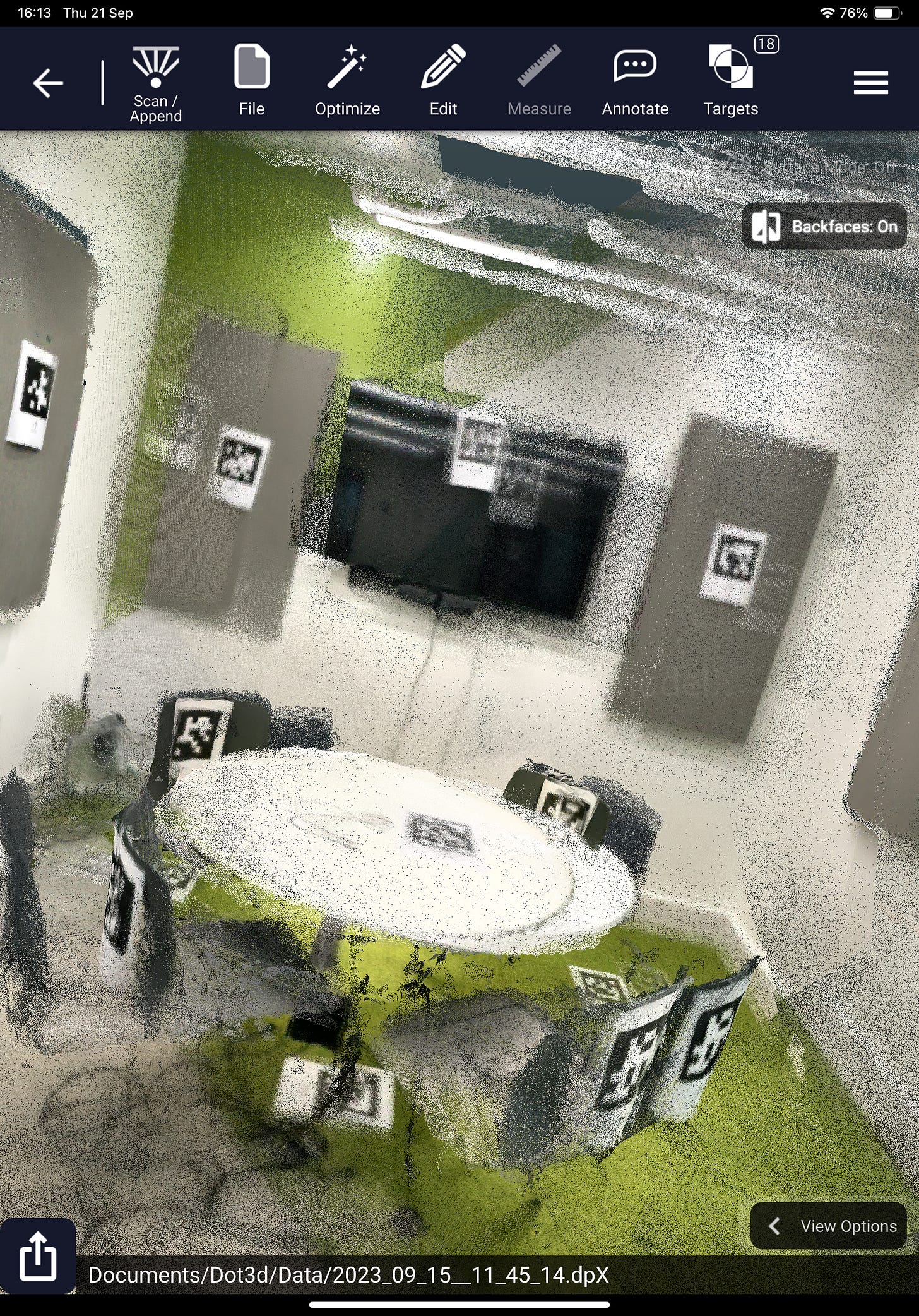

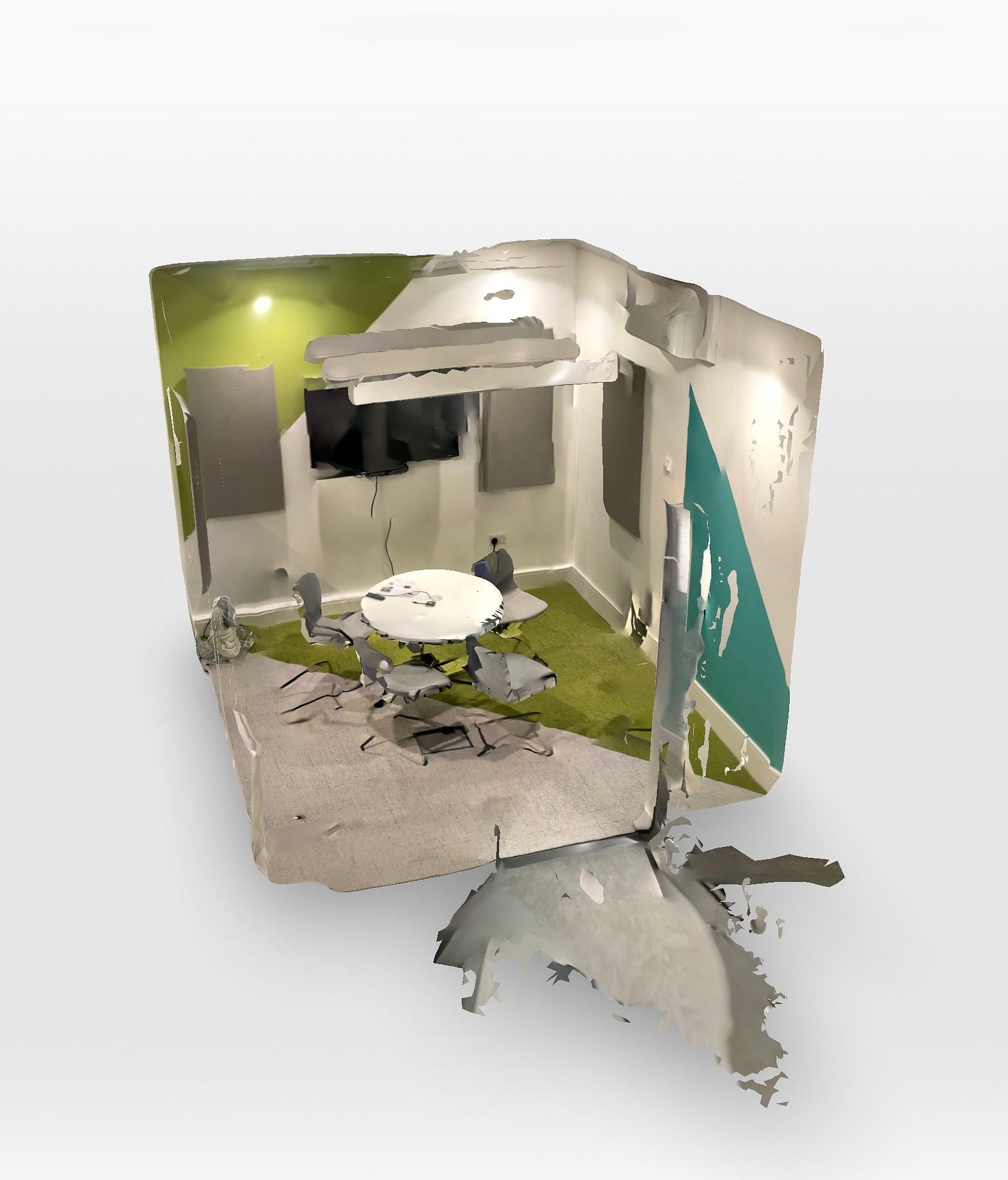

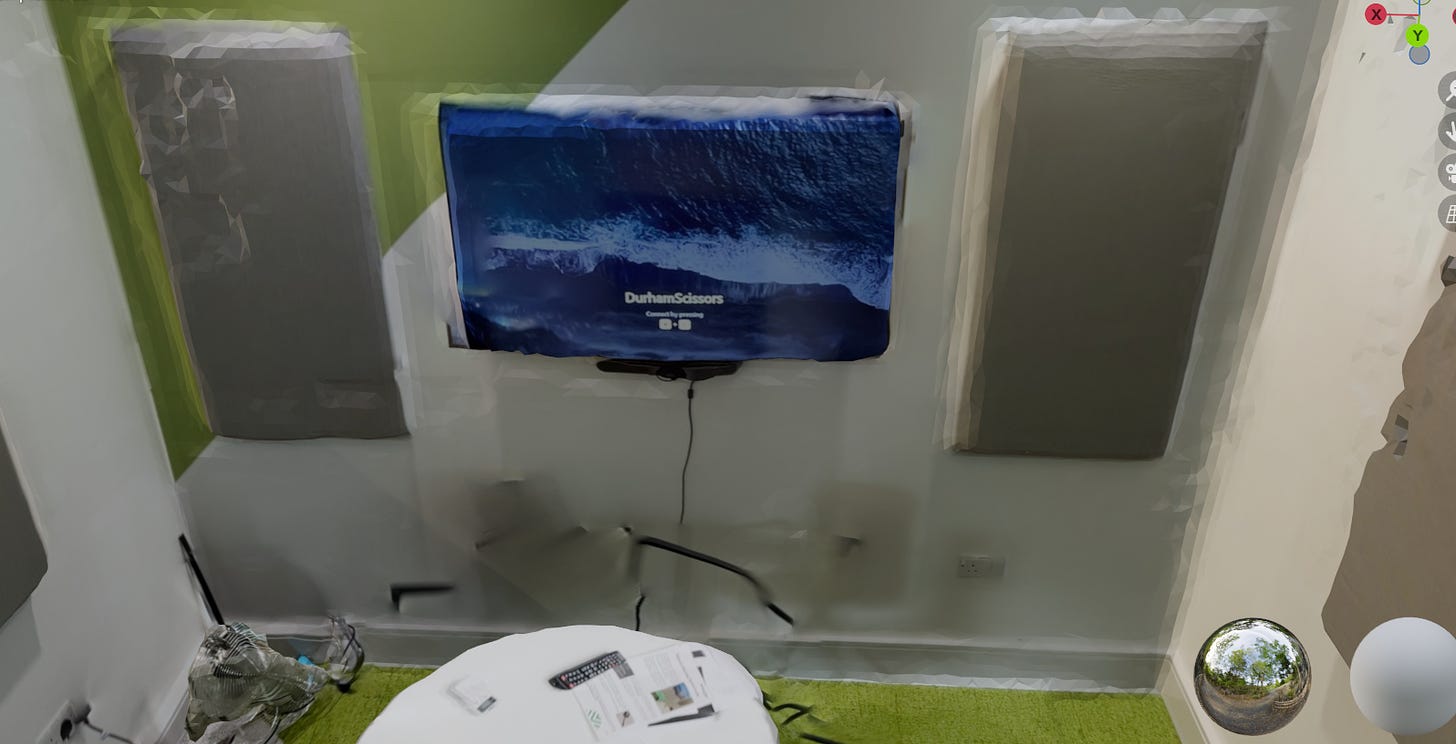

Dot3D

The second app I looked at is Dot3D, which supports the RealSense camera through its Windows app as well as the iPad. Both apps are free to use, but exporting scans from the iPad app requires a paid subscription, which costs £49.99 a month. The iPad app also does not perform as well as the RealSense camera, and objects often appear duplicated or distorted. I found Dot3D excellent for picking up the details of smaller objects, which can then easily be exported as a mesh and loaded into a headset. However, the items within the scan aren’t interactive, and moving them requires a lot of knowledge of editing software. The cable we have for our camera is quite short, which means carrying your laptop around with you as you scan. In addition, the error message “lacks enough depth” often appeared, meaning you have to realign the camera with a place you have already scanned and go again, which can make the process quite tedious.

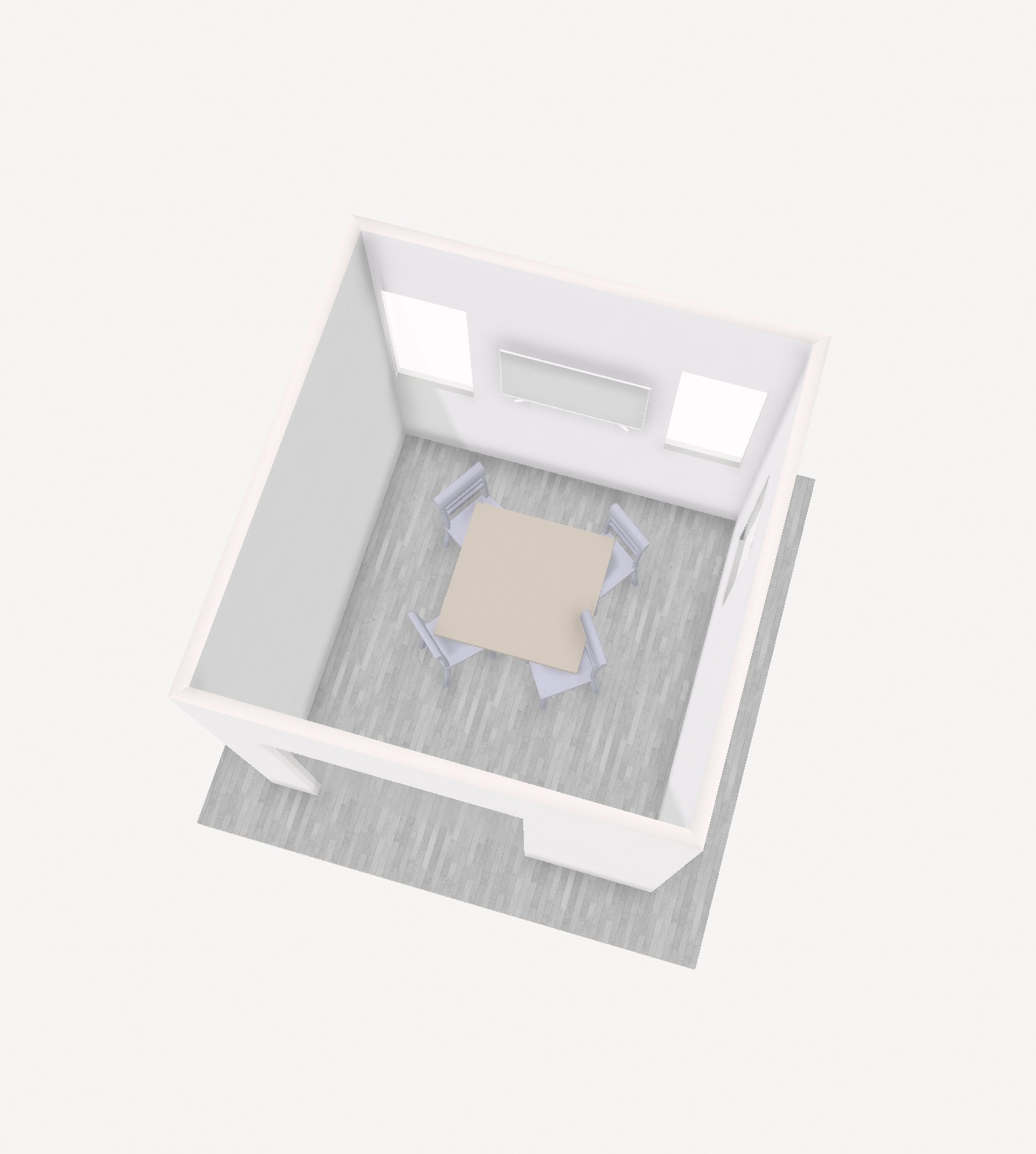

The same room, made with Dot3D

Polycam

Polycam is free and has many different options for creating room scans:

- Auto: Automatically scans the room using LiDAR – this is good at capturing detail within the room. However, a downside is that it does not seem to like shiny or thin objects.

- Manual: This involves manually taking photos to build up a picture of the room. This did not work as well: it picked up the furniture in the room, but the furniture legs were missing.

- 360 with AI autofill: This involves taking a 360 panoramic scan of the room, and then AI is used to fill in the top and bottom of the scan. This isn’t great and interpreted the white table in our meeting room as a white void. When you export this as an OBJ, it exports as a sphere.

- Room mapping: This is pretty cool and accurately maps out the geometry of the room. However, as previously mentioned, when you export a model, it can be quite difficult to edit or move the furniture in editing software. Polycam has a nice feature to remove any furniture from a room, making it easier to export the empty room and then add furniture back in your chosen editing software.
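One quick way to sanity-check an export like the 360 one is to read the vertices out of the OBJ file and see whether they all sit at roughly the same distance from their centre. The sketch below is a minimal, hypothetical check (the function names and the 5% tolerance are assumptions, not part of Polycam), and it only reads the plain `v x y z` vertex lines of the OBJ text format:

```python
import math


def read_obj_vertices(obj_text):
    """Collect (x, y, z) tuples from the 'v x y z' lines of an OBJ file."""
    vertices = []
    for line in obj_text.splitlines():
        parts = line.split()
        # Geometric vertices start with 'v'; 'vt', 'vn' and 'f' lines are skipped.
        if len(parts) >= 4 and parts[0] == "v":
            vertices.append((float(parts[1]), float(parts[2]), float(parts[3])))
    return vertices


def looks_spherical(vertices, tolerance=0.05):
    """Rough heuristic: are all vertices about the same distance from their centroid?

    Caveat: a mesh made only of the eight corners of a box would also pass,
    so treat a True result as a hint to open the model, not as proof.
    """
    if len(vertices) < 4:
        return False
    count = len(vertices)
    centroid = tuple(sum(v[axis] for v in vertices) / count for axis in range(3))
    radii = [math.dist(v, centroid) for v in vertices]
    mean_radius = sum(radii) / count
    if mean_radius == 0:
        return False
    return (max(radii) - min(radii)) / mean_radius <= tolerance


if __name__ == "__main__":
    # A tiny stand-in for an exported file: six points on a unit sphere.
    sample = """
    v 1 0 0
    v -1 0 0
    v 0 1 0
    v 0 -1 0
    v 0 0 1
    v 0 0 -1
    """
    print(looks_spherical(read_obj_vertices(sample)))  # True
```

For a real export you would pass in the contents of the file, for example `read_obj_vertices(open("room.obj").read())`, where `room.obj` is a placeholder name.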

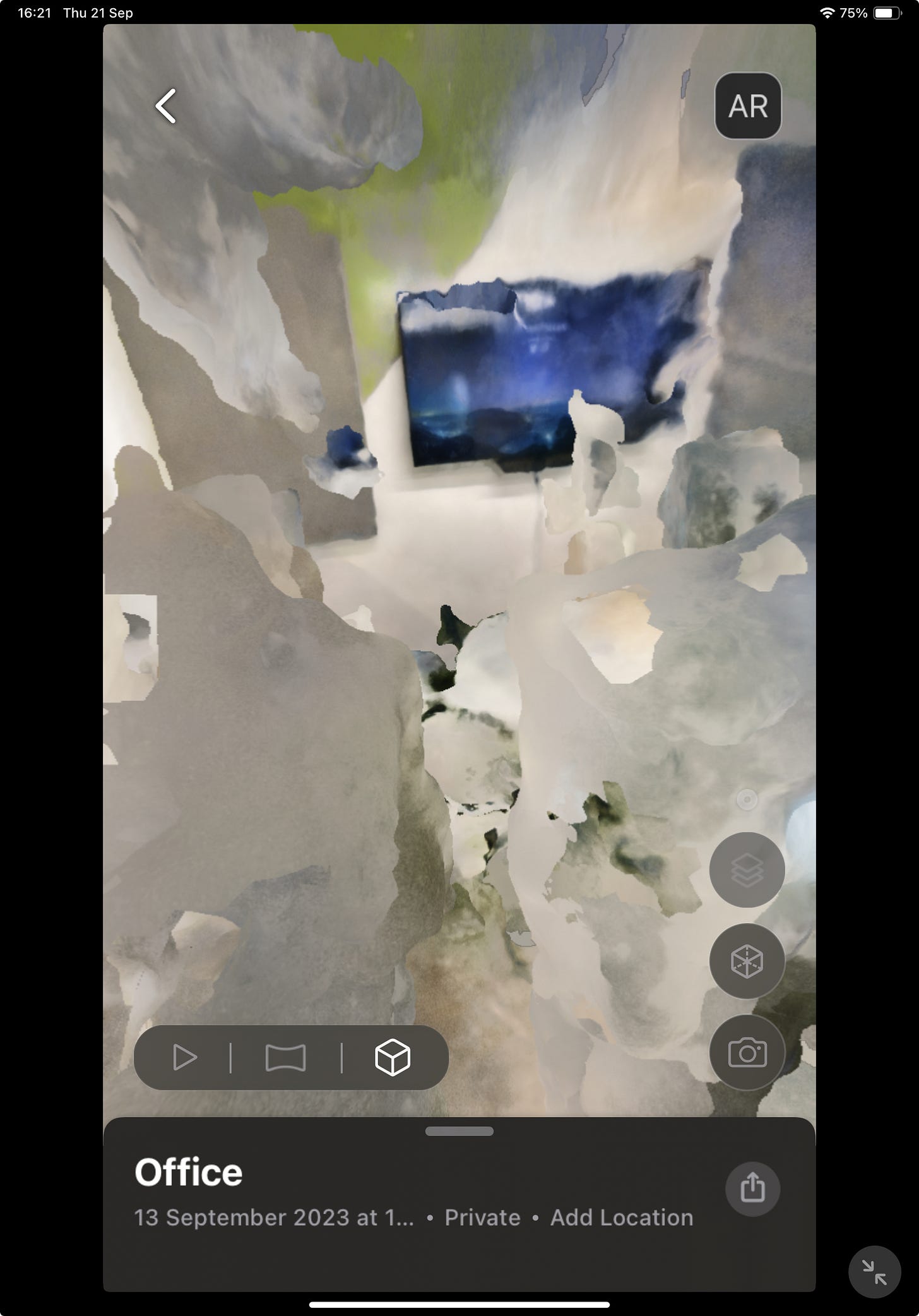

Luma AI

If you don’t have a LiDAR scanner, NeRF (neural radiance field) is an AI technique that builds a 3D scene from a set of photos of a room, which can then be converted into a mesh. Luma is a free app that allows you to do this using your mobile or tablet. On their website, they have lots of great examples of 3D models created using their app. Here is a scan I made using the Luma app on the iPad, but be warned, it looks like it came straight from a horror movie set.

My failure to replicate the quality of the examples on their website may be down to not taking enough photos. It is also possible that uploading a series of photos to the Luma API gives better results. This is something I plan to explore later.

Who’s the winner?

Based on these experiments, for the rest of this project I plan to use room scans made with Polycam and to experiment with its furniture removal feature. These scans could form the backbone of my VR room and should allow more interaction and customisation. I will also compare this to LiDAR scans.

Current tools available for room scanning require practice and often in-depth knowledge to achieve high-quality results. While I am still working to perfect my room scanning abilities, I would advise starting with a small LiDAR camera, like the one on some phones.

Here are my 3 top tips:

- Take time to do your scans, making sure you get all angles of the room and the objects within it.

- Polycam advises moving in an S shape as you travel around the room.

- Practice makes perfect.

Happy room scanning! :)